Exploring the Consequences of Type I and Type II Errors in Statistical Hypothesis Testing


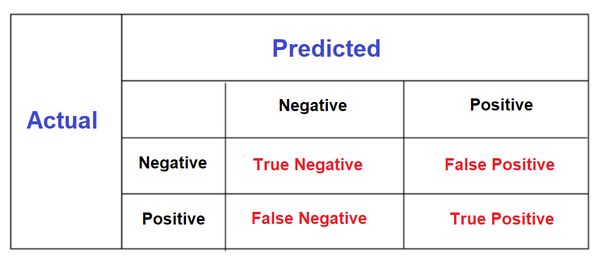

In statistical hypothesis testing, and in statistical tests more generally, we use four terms: True Positive, True Negative, False Positive, and False Negative. "True" means the test predicted the outcome correctly, and "False" means the test made a mistake.

The image above shows how true and false results line up in the actual vs. predicted table.

We want more True Positive and True Negative because these are the results that are correct in the actual scenario as well as in the test result.

Similarly, we want to avoid False Negative and False Positive, because these are the false results given by our test where actual and test results don't match.

Still, no test is 100% accurate, so we cannot avoid both kinds of error entirely, but we can certainly reduce how often they occur.
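To make the four outcomes concrete, here is a minimal sketch that counts them from a list of actual conditions and a list of test results. The function name `confusion_counts` and the sample data are made up for illustration.

```python
def confusion_counts(actual, predicted):
    """Count the four outcomes of a binary test.

    `actual` and `predicted` are equal-length lists of booleans,
    where True means "positive". Both names are illustrative.
    """
    counts = {"TP": 0, "TN": 0, "FP": 0, "FN": 0}
    for a, p in zip(actual, predicted):
        if a and p:
            counts["TP"] += 1      # test says positive, and it is
        elif not a and not p:
            counts["TN"] += 1      # test says negative, and it is
        elif not a and p:
            counts["FP"] += 1      # test says positive, but it is not
        else:
            counts["FN"] += 1      # test says negative, but it is positive
    return counts


# Hypothetical data: what was really the case vs. what the test reported.
actual = [True, True, False, False, True, False, False, True]
predicted = [True, False, False, True, True, False, False, True]

counts = confusion_counts(actual, predicted)
print(counts)
```

In this toy data the test is right six times out of eight, with one false positive and one false negative.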

More technically, these are referred to as Type I and Type II Errors.

We commit a Type I Error when we reject the null hypothesis even though it is, in fact, true.

A Type II Error occurs when we fail to reject (loosely, "accept") the null hypothesis even though it is, in fact, false.

False Positive = Type I Error

False Negative = Type II Error
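One way to see both error types in action is to simulate them. The sketch below is only an illustration under assumed settings: a two-sided z-test with known standard deviation 1, a sample size of 25, a significance level of 0.05, and an alternative mean of 0.5. None of these numbers come from a real study, and the function names are hypothetical.

```python
import random
from statistics import NormalDist, mean


def rejects_null(sample, mu0=0.0, sigma=1.0, alpha=0.05):
    """Two-sided z-test: True if we reject H0 (mean == mu0)."""
    n = len(sample)
    z = (mean(sample) - mu0) / (sigma / n ** 0.5)
    critical = NormalDist().inv_cdf(1 - alpha / 2)
    return abs(z) > critical


def rejection_rate(true_mean, trials=5000, n=25, seed=0):
    """Fraction of simulated experiments in which H0 is rejected."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    rejections = 0
    for _ in range(trials):
        sample = [rng.gauss(true_mean, 1.0) for _ in range(n)]
        if rejects_null(sample):
            rejections += 1
    return rejections / trials


# H0 is true (mean really is 0): every rejection is a Type I Error.
type_1_rate = rejection_rate(true_mean=0.0)

# H0 is false (mean is really 0.5): every non-rejection is a Type II Error.
type_2_rate = 1 - rejection_rate(true_mean=0.5)

print(f"Type I error rate:  {type_1_rate:.3f}")
print(f"Type II error rate: {type_2_rate:.3f}")
```

The Type I rate should land close to the chosen significance level, while the Type II rate depends on how far the true mean is from the null value and on the sample size.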

A natural question follows:

Which error would you say is more serious?

Well, things are not so black and white here; the answer depends on the situation in which the test is applied.

For example, when you take a pregnancy test, your null hypothesis is:

“I am not pregnant”

Rejecting the hypothesis gives you a ‘+’: Congratulations! You are pregnant! Accepting the hypothesis gives you a ‘–’: Sorry, better luck next time!

However, tests can malfunction, and false positives do occur. In this case, a false positive would be that little ‘+’ when you are, in fact, not pregnant. A false negative, of course, would be the ‘–’ when you’ve got a little baby growing inside you.

Imagine someone who has been trying for a child for a long time, and then by some miracle their pregnancy test comes back positive. They mentally prepare themselves for having a baby and, after a short period of ecstasy, somehow find out that they are, in fact, not pregnant!

This is a terrible outcome!

Similarly, consider a false negative for someone who really does not want a child and is not ready for one. Reassured by the negative result, she carries on drinking and smoking, which can be incredibly damaging for her, her family, and her baby.

So, it is quite challenging to generalize which error is worse.

- Note: Pregnancy tests have improved a lot, however, and the chance of a false negative is now small.

As you can see from the example above, each error has its own set of issues depending on the problem. There is no worst error to commit because each problem brings its own set of complications to the table. Errors will happen throughout experiments. It is up to the project designer, test maker, or data scientist to determine which error needs to be reduced the most.

Because each problem has its own context and obstacles, you will need to take them all into consideration when designing your experiments and projects to know which error is the worst one to commit.
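One rough way to weigh the two errors against each other is to put a cost on each and compare the expected cost per person tested. The sketch below assumes two hypothetical tests, a prevalence of 20%, and invented cost units. It is an illustration of the idea, not a recommended decision rule.

```python
def expected_cost(fp_rate, fn_rate, prevalence, cost_fp, cost_fn):
    """Average cost per person tested, given error rates and error costs.

    False positives can only happen to people without the condition,
    false negatives only to people with it.
    """
    return (1 - prevalence) * fp_rate * cost_fp + prevalence * fn_rate * cost_fn


# Two hypothetical tests with opposite weaknesses.
test_a = {"fp_rate": 0.10, "fn_rate": 0.02}  # more false positives
test_b = {"fp_rate": 0.02, "fn_rate": 0.10}  # more false negatives

prevalence = 0.2  # assumed share of people who truly have the condition

# Scenario 1: a false negative is ten times as costly as a false positive.
a_fn_costly = expected_cost(**test_a, prevalence=prevalence, cost_fp=1, cost_fn=10)
b_fn_costly = expected_cost(**test_b, prevalence=prevalence, cost_fp=1, cost_fn=10)

# Scenario 2: a false positive is ten times as costly as a false negative.
a_fp_costly = expected_cost(**test_a, prevalence=prevalence, cost_fp=10, cost_fn=1)
b_fp_costly = expected_cost(**test_b, prevalence=prevalence, cost_fp=10, cost_fn=1)

print("False negatives costly:", "A" if a_fn_costly < b_fn_costly else "B", "is cheaper")
print("False positives costly:", "A" if a_fp_costly < b_fp_costly else "B", "is cheaper")
```

With these made-up numbers, the preferred test flips depending on which error is more expensive, which is the point of the pregnancy example: the context decides which error matters most.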

Similar cases were reported with COVID tests; the BBC published an insightful article discussing false positives.

You may find many more examples where these concepts are discussed, and I find this one of the most fascinating concepts in statistics.

That's all for this week, something interesting next week, and you can always connect with me Here 😃